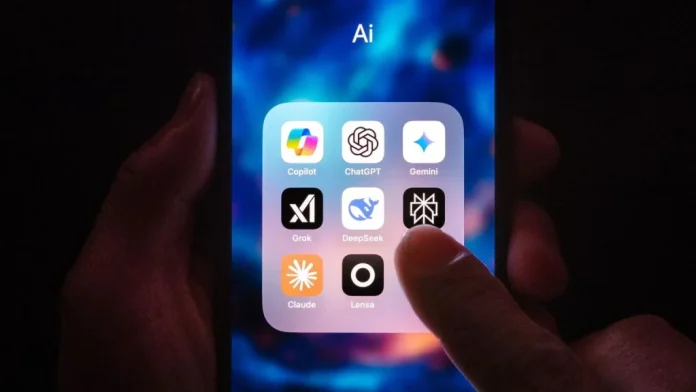

The United States government has struck new agreements with Google DeepMind, Microsoft and Elon Musk’s xAI to evaluate their artificial intelligence models before they reach the public, in a significant shift toward oversight by an administration that has largely avoided regulating the technology sector.

The Center for AI Standards and Innovation (CAISI) at the Department of Commerce’s National Institute of Standards and Technology announced the new agreements on Tuesday, saying they will enable government evaluation of AI models before they are publicly available, as well as post-deployment assessments and other research.

CAISI's existing partnerships with Anthropic and OpenAI, first announced in 2024, remain in place and have been renegotiated to reflect updated directives from the Commerce secretary and President Donald Trump's AI Action Plan.

CAISI Director Chris Fall said independent measurement science is essential to understanding frontier AI and its national security implications, adding that the expanded industry collaborations help the centre scale its work at a critical moment.

Under the framework, developers frequently provide CAISI with models that have reduced or removed safeguards so that evaluators can probe for national security risks. Evaluators from across government may participate in assessments through the CAISI-convened TRAINS Taskforce, a group of interagency experts focused on AI national security concerns.

CAISI said it had already completed more than 40 evaluations, including of cutting-edge models not yet available to the public.

Of the companies, only Microsoft commented publicly on the arrangement. Natasha Crampton, Microsoft's chief responsible AI officer, said CAISI offers technical, scientific and national security expertise that complements the company's own internal testing. Google declined to comment further on the agreement.

The move marks a notable policy turn. The agreements fulfil a pledge the Trump administration made in July to partner with technology companies to vet their AI models for national security risks. Trump had previously signed executive orders aimed at removing regulatory barriers to AI development, framing the technology as central to America's global competitiveness.

The timing is significant. Anthropic’s Mythos model, which the company said is far ahead of other models in terms of cybersecurity capabilities, has sparked concern among governments, banks and utility companies over the past month, raising questions about the pace of AI development and the adequacy of existing oversight mechanisms.